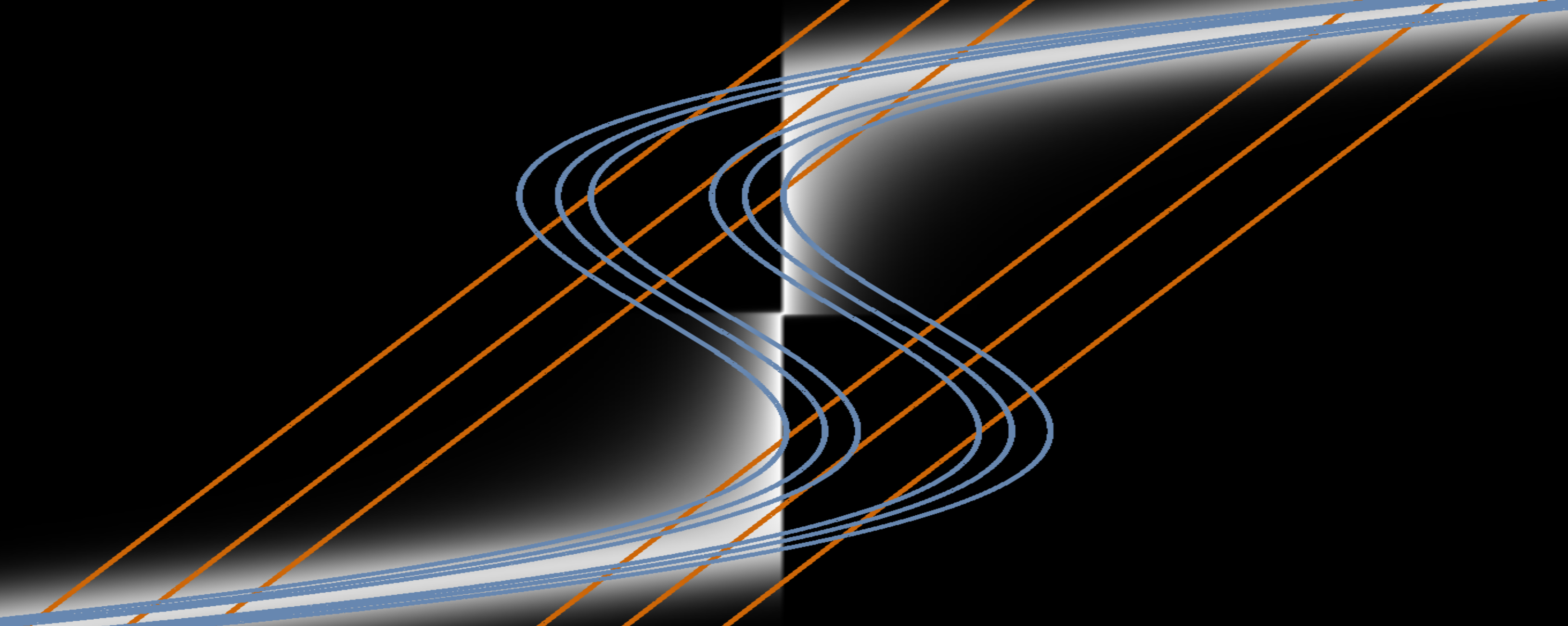

Approximations of the state distribution for a nonlinear filtering problem by different variants of the Gaussian Filter (GF). The white density represents the true posterior distribution of the state given the measurement. The orange contour lines show the approximation made by the standard GF. The blue contour lines show that the approximation can be made much more accurate by an extension of the GF developed in this project.

Decision making requires knowledge of some variables of interest. In the vast majority of real-world problems, these variables are latent, i.e. they cannot be observed directly and must be inferred from available measurements. To maintain an up-to-date distribution over the latent variables, past beliefs have to be fused continuously with incoming measurements, taking into account the dynamics of the underlying process. This procedure is called filtering and it has numerous applications in diverse fields such as robotics, communication, and econometrics.

The Gaussian Filter (GF) is one of the most widely used filtering algorithms; well-known instances include the Extended Kalman Filter, the Unscented Kalman Filter, and the Divided Difference Filter. However, it has limitations that are prohibitive for certain applications:

- The dependency between the state and the measurement is approximated by a linear function (see the orange contour lines in the figure above). This is problematic for highly nonlinear systems and can even cause the filter to fail completely.

- The GF is very sensitive to outliers.

- The computational cost is large for high-dimensional states and measurements.
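The first limitation can be illustrated with a minimal sketch of a scalar GF measurement update. The code below is an assumption-laden illustration, not the implementation used in this project: it estimates the required moments by plain Monte Carlo sampling (the EKF, UKF, and DDF use other approximations), and the names `gaussian_filter_update`, `h`, and `r` are hypothetical.

```python
import random


def gaussian_filter_update(mu, var, h, r, y, n_samples=20000, seed=0):
    """Scalar GF measurement update via Monte Carlo moment matching.

    Prior: x ~ N(mu, var). Measurement model: y = h(x) + v, v ~ N(0, r).
    The joint of (x, y) is approximated by a Gaussian with matched moments
    and then conditioned on the observed y.
    """
    rng = random.Random(seed)
    xs = [rng.gauss(mu, var ** 0.5) for _ in range(n_samples)]
    hs = [h(x) for x in xs]

    # Sample moments of the state and the predicted measurement.
    mean_x = sum(xs) / n_samples
    mean_y = sum(hs) / n_samples
    var_x = sum((x - mean_x) ** 2 for x in xs) / n_samples
    var_y = sum((v - mean_y) ** 2 for v in hs) / n_samples + r
    cov_xy = sum((x - mean_x) * (v - mean_y) for x, v in zip(xs, hs)) / n_samples

    # Conditioning a joint Gaussian: the posterior mean is affine in y.
    gain = cov_xy / var_y
    post_mean = mean_x + gain * (y - mean_y)
    post_var = var_x - gain * cov_xy
    return post_mean, post_var


# Linear measurement: the result is close to the exact Kalman update (0.8, 0.2).
print(gaussian_filter_update(0.0, 1.0, lambda x: 2.0 * x, 1.0, y=2.0))

# Quadratic measurement with a zero-mean prior: state and measurement are
# nearly uncorrelated, so the gain is near zero and y is effectively ignored.
print(gaussian_filter_update(0.0, 1.0, lambda x: x ** 2, 1.0, y=4.0))
```

In the quadratic case the measurement clearly carries information about the magnitude of the state, yet the update leaves the prior almost unchanged, because a linear dependency cannot capture it.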

To address these problems, we view the GF from a variational-inference perspective: we regard the Gaussian filtering equations as an optimal approximation to exact inference in nonlinear systems.
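For orientation, the standard GF measurement update can be written as below. This is the textbook moment-matching form rather than notation taken from the project's publications; the symbols $x$ (state), $y$ (measurement), and the moments $\mu$, $\Sigma$ are introduced here for illustration only.

```latex
% Gaussian approximation q of the true joint p(x, y), with matched moments
q(x, y) = \mathcal{N}\!\left(
  \begin{bmatrix} x \\ y \end{bmatrix};
  \begin{bmatrix} \mu_x \\ \mu_y \end{bmatrix},
  \begin{bmatrix} \Sigma_{xx} & \Sigma_{xy} \\ \Sigma_{xy}^\top & \Sigma_{yy} \end{bmatrix}
\right)

% Conditioning q on the observed measurement y
\mu_{x \mid y} = \mu_x + \Sigma_{xy} \Sigma_{yy}^{-1} (y - \mu_y), \qquad
\Sigma_{x \mid y} = \Sigma_{xx} - \Sigma_{xy} \Sigma_{yy}^{-1} \Sigma_{xy}^\top
```

Among all Gaussians $q$, the one whose mean and covariance match those of $p(x, y)$ minimizes the Kullback-Leibler divergence $\mathrm{KL}(p \,\|\, q)$, which is one sense in which the approximation is optimal. The posterior mean is affine in $y$ and the posterior covariance does not depend on $y$ at all, which makes the linear-dependency limitation explicit.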

On this basis, we work on extensions of the GF that allow it to capture the dependencies in nonlinear systems more accurately. Furthermore, we investigate how the structure of certain dynamical systems can be exploited to increase computational efficiency.