We study learning and control in stochastic and hybrid dynamical systems, such as robots.

We are interested in data-driven approaches to optimal and robust control, with applications in robotics. To tackle the challenges that arise in complex systems, we combine optimal control, a principled framework for decision-making and control, with reinforcement learning to design controllers for robotic systems. This ongoing project currently focuses on two areas.

The first focus area is reinforcement learning (approximate dynamic programming) for robust, resource-aware control. Real-world feedback control is typically resource-intensive. We are interested in controlling systems with limited resources while still accomplishing the control task. At the heart of our approach, we use a deterministic policy gradient method to design robust controllers without a priori knowledge of the system model. The resulting design achieves model-free control with significant resource savings.
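For reference, the standard deterministic policy gradient theorem, on which methods of this kind build, expresses the gradient of the expected return $J(\theta)$ of a deterministic policy $\mu_\theta$ through the action-gradient of its action-value function $Q^{\mu}$, where $\rho^{\mu}$ is the discounted state distribution induced by $\mu_\theta$. The notation here is illustrative and not necessarily that of the publication:

$$
\nabla_\theta J(\theta) = \mathbb{E}_{s \sim \rho^{\mu}}\!\left[ \nabla_\theta \mu_\theta(s)\, \nabla_a Q^{\mu}(s, a)\big|_{a = \mu_\theta(s)} \right]
$$

Because only $\nabla_a Q^{\mu}$ is required, a learned critic can stand in for $Q^{\mu}$, which is what allows the update to run without a model of the system dynamics. How resource costs enter the reward or the action space is specific to the project's formulation and is not shown here.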

The first publication appeared in the proceedings of the 2018 IEEE Conference on Decision and Control (CDC).

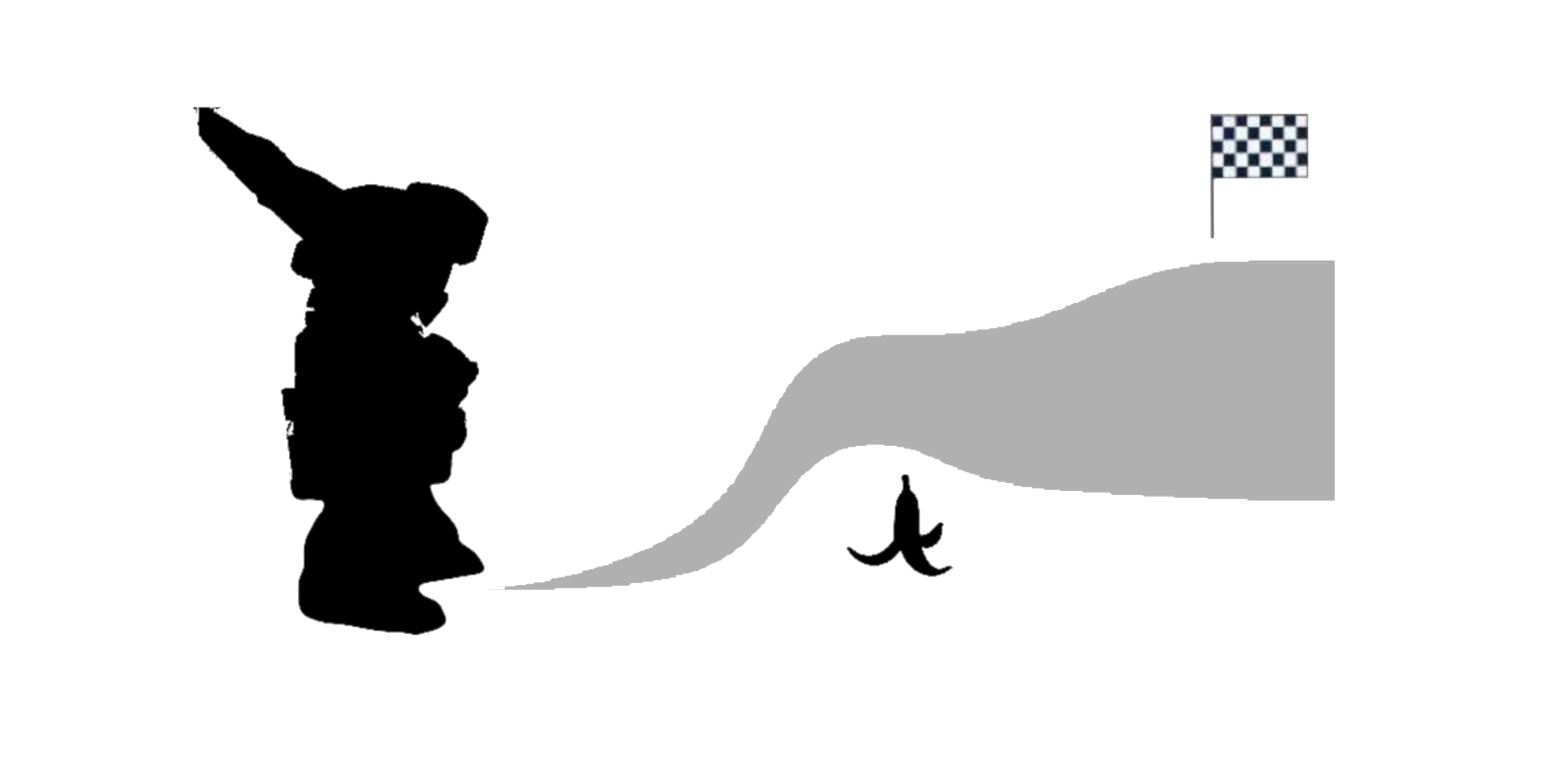

The second focus area is control design for hybrid systems. Hybrid systems combine continuous dynamics with discrete events, and they model many real-world systems well, such as legged robot locomotion. We apply optimal control (e.g., trajectory optimization and model predictive control) and probabilistic inference to planning and control under uncertainty. The goal is a robust control design that can plan and control in uncertain hybrid systems, such as robotic contact models.
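To make the definition of a hybrid system concrete, here is a minimal sketch using a bouncing ball rather than a legged robot: the continuous flow is free fall, the guard is ground contact, and the discrete event is an impact reset map that reverses the velocity and scales it by a restitution coefficient. The names (`FlightState`, `simulate_bounces`, etc.) are hypothetical, and the example contains no controller; it only illustrates the flow/guard/reset structure that contact-rich robot models share.

```python
import math
from dataclasses import dataclass

G = 9.81  # gravitational acceleration [m/s^2]


@dataclass
class FlightState:
    height: float    # x, height above the ground [m]
    velocity: float  # v, vertical velocity [m/s], positive upward


def time_to_impact(s: FlightState) -> float:
    """Time until the guard x = 0 is reached under the flow x' = v, v' = -G.

    Solves x + v*t - G*t^2/2 = 0 for its positive root.
    """
    return (s.velocity + math.sqrt(s.velocity ** 2 + 2.0 * G * s.height)) / G


def flow(s: FlightState, t: float) -> FlightState:
    """Continuous dynamics: exact ballistic motion over a duration t."""
    return FlightState(
        height=s.height + s.velocity * t - 0.5 * G * t ** 2,
        velocity=s.velocity - G * t,
    )


def reset(s: FlightState, restitution: float) -> FlightState:
    """Discrete event (impact): pin height to 0, reverse and scale velocity."""
    return FlightState(height=0.0, velocity=-restitution * s.velocity)


def simulate_bounces(h0: float, restitution: float, n_bounces: int) -> list[float]:
    """Alternate flow and reset; return the apex height after each bounce."""
    state = FlightState(height=h0, velocity=0.0)
    apexes = []
    for _ in range(n_bounces):
        state = flow(state, time_to_impact(state))  # flow until the guard fires
        state = reset(state, restitution)           # apply the jump map
        apexes.append(state.velocity ** 2 / (2.0 * G))  # apex of the next flight
    return apexes


print(simulate_bounces(h0=1.0, restitution=0.8, n_bounces=3))  # ≈ [0.64, 0.41, 0.26]
```

Each apex shrinks by a factor of $e^2$ per impact, where $e$ is the restitution coefficient. Adding an input to the flow and replacing the reset map with a robot contact model is where trajectory optimization or model predictive control would enter; that step is beyond this sketch.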