2021

Doerr, A.

Models for Data-Efficient Reinforcement Learning on Real-World Applications

University of Stuttgart, Stuttgart, October 2021 (phdthesis)

2020

Baumann, D.

Learning and Control Strategies for Cyber-physical Systems: From Wireless Control over Deep Reinforcement Learning to Causal Identification

KTH Royal Institute of Technology, Stockholm, Sweden, December 2020 (phdthesis)

Marco-Valle, A.

Bayesian Optimization in Robot Learning - Automatic Controller Tuning and Sample-Efficient Methods

Eberhard Karls Universität Tübingen, Tübingen, July 2020 (phdthesis)

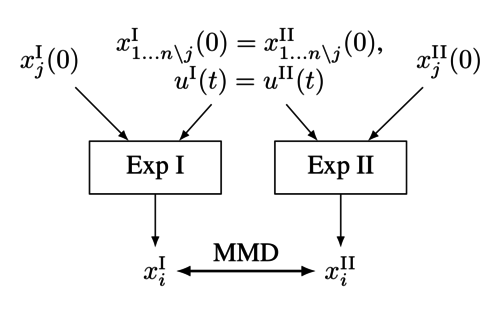

Baumann, D., Solowjow, F., Johansson, K. H., Trimpe, S.

Identifying Causal Structure in Dynamical Systems

2020 (techreport)

2019

Baumann, D.

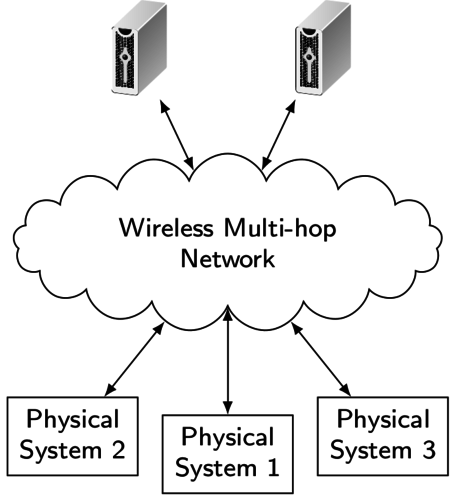

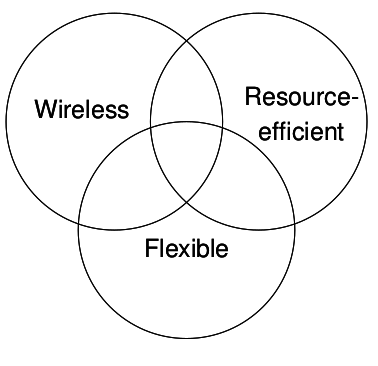

Fast and Resource-Efficient Control of Wireless Cyber-Physical Systems

KTH Royal Institute of Technology, Stockholm, February 2019 (phdthesis)

2016

Ebner, S., Trimpe, S.

Supplemental material for 'Communication Rate Analysis for Event-based State Estimation'

Max Planck Institute for Intelligent Systems, January 2016 (techreport)

2015

Trimpe, S.

Distributed Event-based State Estimation

Max Planck Institute for Intelligent Systems, November 2015 (techreport)

Marco, A.

Gaussian Process Optimization for Self-Tuning Control

Polytechnic University of Catalonia (BarcelonaTech), October 2015 (mastersthesis)

Doerr, A.

Adaptive and Learning Concepts in Hydraulic Force Control

University of Stuttgart, September 2015 (mastersthesis)

Trimpe, S.

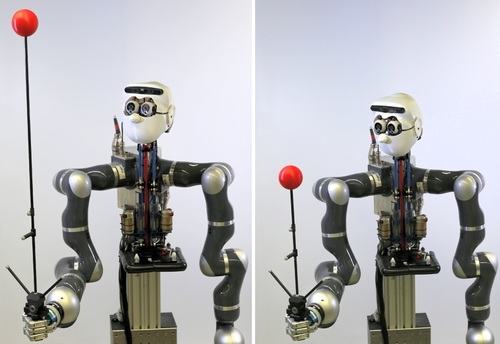

Lernende Roboter

In Jahrbuch der Max-Planck-Gesellschaft, Max Planck Society, May 2015 (popular science article in German) (inbook)

Doerr, A.

Policy Search for Imitation Learning

University of Stuttgart, January 2015 (thesis)

2013

Trimpe, S.

Distributed and Event-based State Estimation and Control

ETH Zurich, 2013 (phdthesis)